Abstract

Impaired musical rhythm abilities and developmental speech-language related disorders are biologically and clinically intertwined. Prior work examining their relationship has primarily used small samples; here, we studied associations at population-scale by conducting the largest systematic epidemiological investigation to date (total N = 39,358). Based on existing theoretical frameworks, we predicted that rhythm impairment would be a significant risk factor for speech-language disorders in the general adult population. Findings were consistent across multiple independent datasets and rhythm subskills (including beat synchronization and rhythm discrimination), and aggregate meta-analyzed data showed that non-linguistic rhythm impairment is a modest but consistent risk factor for developmental speech, language, and reading disorders (OR = 1.33 [1.18 – 1.49]; p < .0001). Further, cross-trait polygenic score analyses (total N = 7180) indicated shared genetic architecture between musical rhythm and reading abilities, suggesting genetic pleiotropy between musicality and language-related phenotypes.


Introduction

Developmental communication-related difficulties and disorders are highly prevalent. In the U.S., for example, speech and language disorders collectively affect between 3% and 16% of all children1; and global estimates for expressive and receptive language problems are in a similar range (0.4–25%)2. Prevalences of these disorders are estimated as follows: language problems characteristic of developmental language disorder (DLD) at 3–7%3,4, dyslexia at 3–10%5, stuttering at 0.3–5.6%6, and speech disorders of articulation or phonology at 10%7 with higher prevalence in younger children (e.g., 15–16% at age 3)8. There are tremendous health and societal impacts of these disorders: individuals with developmental communication disorders are at increased risk for mental health disorders, poorer physical health, long-term issues with health-related quality of life9, and poorer educational and socioeconomic outcomes4,10,11,12,13,14,15. Despite their impact, many speech-language and reading disorders remain systematically under-identified16,17,18. Identifying risk factors for these disorders is therefore paramount for understanding, identifying, and treating them19. Notably, many of the current diagnostic and screening procedures are prone to inequitable outcomes based on demographic factors such as gender, socioeconomic status (SES), minority status, and language background (e.g., bilingualism)16,20.

Recent epidemiological approaches using big data suggest that leveraging co-occurring conditions and features (including medical comorbidities) of language disorders can be a pathway towards closing the identification gap, and towards a more complete profile of the biological and health implications of these conditions4,15,19,21,22,23. To this end, in the current paper, we explore musical rhythm skills as an underutilized but potentially powerful factor underlying developmental problems with speech-language abilities. While rhythm abilities are known to explain a portion of the inter-individual variability in several speech, language, and reading outcomes in typical development24, this research addresses open questions about the extent to which rhythm skills are related to speech-language disorders in large population samples, as a step towards providing a potentially clinically relevant marker for early identification efforts. The Atypical Rhythm Risk Hypothesis, which posits that individuals with impairments in many different aspects of musical rhythm skills are at higher risk for developmental speech-language disorders25, provides an epidemiological context for the present work.

Musical rhythm skills can be measured using a variety of tasks that require processing of timing and interval information in musical stimuli, such as accurately perceiving small differences between rhythms, extracting the underlying beat from rhythmic information, and synchronizing movements to external rhythms (e.g., tapping). Performance on isochronous tapping tasks is impaired in multiple speech-language disorders, including stuttering26,27, dyslexia28, and DLD29,30. Beat synchronization (tapping in time with a beat) is impaired in children with DLD29,30 and adults with dyslexia31. Despite rhythm and language skills being assessed with very different types of stimuli and tasks, a range of studies suggest that the biological underpinnings of rhythm and language are consistently linked24,25,32.

Transdiagnostic, dimensional approaches (e.g., Research Domain Criteria, or RDoC model) are currently gaining traction in psychiatric epidemiology33, but less so thus far in communication sciences and disorders research. Although speech-language disorders such as dyslexia, DLD, and stuttering exhibit distinct characteristic symptoms, there are commonalities across them, and they are often comorbid with each other34,35. Further, the high heterogeneity of symptoms in speech-language disorders makes identifying and agreeing upon distinct core deficits for each disorder challenging and less practical. Instead, modeled on the emerging practice of utilizing transdiagnostic criteria in other disciplines36,37, identifying possible behavioral and biological dimensions as co-occurring risk factors across disorders could bolster scientific and clinical insights. Dimensional approaches are also more useful for developing personalized treatments (e.g., through the characterization of disorder subtypes, or constellations of symptoms that practitioners can draw on when developing treatment plans)36. Using transdiagnostic approaches presents an opportunity and motivation to identify and deeply characterize potential non-linguistic risk factors and health correlates of language disorders, such as rhythm abilities.

The present work is also grounded in the Musical Abilities, Pleiotropy, Language, and Environment (MAPLE) framework, which posits that shared polygenic architecture (i.e., genetic pleiotropy) underlies a portion of the overlap between musicality traits (including rhythm abilities) and communication traits (including speech, language, and reading abilities)24. In support of the MAPLE framework, positive genetic correlations between musical rhythm abilities and language/reading-related abilities, and negative genetic correlations between rhythm abilities and dyslexia, have been recently shown using findings from genome-wide association studies (GWAS) of rhythm abilities, language/reading abilities, and dyslexia32. Large-scale epidemiological approaches capturing continuous population distributions of rhythm and language skills, as well as the disorder ends of the spectrum, are an important test of these theories.

The current work investigated whether there is converging evidence that musical rhythm impairments are a risk factor for multiple developmental speech-language disorders, in the general population. We examined behavioral and genetic associations between musical rhythm abilities and communication-related problems and disorders (including those related to speech, language, and reading), using population health approaches. Guided by recent evidence-based frameworks24,25, we conducted two main studies using data from five different study cohorts. Study 1 assessed whether lower performance on musical rhythm measures is associated with higher odds of having developmental communication problems or disorders, using a retrospective design. Study 1 achieves a large sample (total N = 39,358) by utilizing data from population-based cohort studies in which both musical rhythm and communication-related traits/constructs were measured. Study 2 (N = 7180) investigated whether musical rhythm and communication traits share polygenic architecture, by combining phenotypic and individual-level genetic data, and drawing on group-level genomic results from recent GWASs of musical rhythm38 and language-related traits39.

Results

Study cohorts curated for epidemiological (Study 1) and genetic (Study 2) research questions are referred to throughout by the following names: (1) Vanderbilt Online Musicality Study; (2) Rhythm Perception Study; (3) Rhythm Production and Synchronization Study; (4) Lifelines; and (5) Adolescent Brain and Cognitive Development (ABCD). We investigated the genetic research questions in two of these cohorts: Vanderbilt Online Musicality Study and ABCD. Figure 1 illustrates the various phenotyping tools utilized to measure musical rhythm traits across the cohorts, including objectively measured and self-reported abilities. In total, these five cohorts represent data from 44,586 participants (of which 44,406 were retained in analyses for the present study). Four of the five cohorts were drawn from existing studies, and one cohort (Lifelines) involved new data collection (see Methods (Cohort Details)).

Distributions for rhythm scores in each cohort are visualized (right). Scores for all rhythm-related measures are normalized, where negative and positive scores indicate standard deviations below and above the mean respectively. Colors represent measures from the five different cohorts: reds = Vanderbilt Online Musicality Study; yellows = Adolescent Brain and Cognitive Development; blues = Rhythm Perception Study; greens = Rhythm Production & Synchronization Study; purples = Lifelines.

Study 1: Epidemiological results

In Study 1 (N = 39,358), we investigated whether relatively weaker musical rhythm scores are associated with higher odds of having a history of speech-language problems or disorders. We tested this hypothesis across four study cohorts, which collectively represented four different musical rhythm traits (see Methods (Cohort Details)). Figure 2 illustrates all association results, controlling for age and sex. Details of phenotypic measures related to musical rhythm abilities and speech-language difficulties/disorders are given in Methods (Cohort Details). Variable selection for models was theory-driven throughout. Rhythm measures were included as predictors, and speech-language measures were included as outcomes, to test our central hypotheses (see Methods (Data Processing and Analyses)). All Study 1 statistical models controlled for age and sex (Supplementary Data S.2.1–S.5.6).

Rows represent specific rhythm phenotypes assessed (e.g., beat synchronization accuracy), and Ns for cases and controls, within each speech-language problem or disorder assessed (e.g., dyslexia). The X-axis is represented on a logarithmic scale, relative to the vertical dashed line (OR = 1). Data represent the results of logistic regressions. Values to the right of the dashed line represent up to twice as high odds of having a history of speech-language difficulties or disorder per 1 SD lower scores for every rhythm phenotype. Values to the left represent up to half the odds. Gray diamond marker sizes represent either small (N < 1000), medium (N between 1000 and 30,000), or large (N > 30,000) samples. Overall odds ratio (OR = 1.33 [1.18 – 1.49]; p = 6.07 × 10−66) represents results of a mixed effects meta-analysis of 16 logistic regression models, using REML estimations, and modeling nested random effects to account for heterogeneity across data cohorts and partially overlapping samples across datasets. Cohorts are identified using the following: OM = Vanderbilt Online Musicality Study; RP = Rhythm Perception Study; RPS = Rhythm Production and Synchronization Study; LL = Lifelines.

Communication-related problems/disorders and musical rhythm abilities

History of Speech-Language Therapy

First, we investigated musical rhythm discrimination abilities, which capture perceptual sensitivity to auditory rhythms. A 1 SD decrease in rhythm scores was associated with 16% higher odds of having received speech-language therapy as a child, in the Vanderbilt Online Musicality Study (N = 2494; OR = 1.16 [1.03–1.30]; p = .010457) and 47% higher odds in the Rhythm Perception Study (N = 668; OR = 1.47 [1.12–1.94]; p = .005776). Second, we investigated self-reported rhythm abilities. A 1 SD decrease in self-reported rhythm scores was associated with 21% higher odds of having received speech-language therapy as a child, in the Rhythm Production and Synchronization Study (N = 1393; OR = 1.21 [1.04–1.41]; p = .014160). Next, in a subset of participants from the Rhythm Production and Synchronization Study, we investigated musical beat synchronization abilities. A 1 SD decrease in rhythm scores was associated with 72% higher odds of having received speech-language therapy as a child (N = 539; OR = 1.72 [1.36–2.18]; p = 5.18 × 10−6). Finally, in a second subset of participants from the Rhythm Production and Synchronization Study, we investigated isochronous tapping abilities. A 1 SD decrease in rhythm scores was associated with 53% higher odds of having received speech-language therapy as a child (N = 626; OR = 1.53 [1.23–1.88]; p = 8.22 × 10−5).
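To make the effect-size language above concrete, the following minimal sketch shows how an odds ratio per 1 SD decrease maps onto the "percent higher odds" phrasing, and how an approximate standard error, z statistic, and two-sided p-value can be recovered from a reported 95% CI under a normal (Wald) approximation. The function names are illustrative and are not taken from the study's analysis code. Because the inputs are rounded published values, the recovered p-value should only approximate the reported one.

```python
import math


def percent_change_in_odds(odds_ratio):
    """Convert an odds ratio into a percent change in odds (1.16 -> about 16%)."""
    return (odds_ratio - 1.0) * 100.0


def wald_from_ci(odds_ratio, ci_low, ci_high, z_crit=1.96):
    """Approximate SE, z, and two-sided p from an OR and its 95% CI.

    Assumes the interval is symmetric on the log-odds scale (Wald interval).
    """
    log_or = math.log(odds_ratio)
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * z_crit)
    z = log_or / se
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided normal p-value
    return se, z, p


# Rhythm discrimination and speech-language therapy history in the
# Vanderbilt Online Musicality Study: OR = 1.16 [1.03-1.30].
pct = percent_change_in_odds(1.16)
se, z, p = wald_from_ci(1.16, 1.03, 1.30)
print(f"{pct:.0f}% higher odds per 1 SD lower rhythm score")
print(f"approx. SE = {se:.3f}, z = {z:.2f}, p = {p:.3f}")
```

The same two functions can be applied to any of the odds ratios reported in this section; an odds ratio below 1 (as for stuttering) yields a negative percent change.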

Speech or articulation problem/disorder and stuttering

First, we investigated associations between self-reported rhythm abilities and the odds of having a history of speech articulation problems or disorders. In Lifelines, 5.84% of the adult sample reported a history of speech/articulation problems or disorder. A 1 SD decrease in rhythm scores was associated with 21% higher odds of having a speech or articulation disorder (N = 31,172; OR = 1.21 [1.16–1.27]; p = 4.60 × 10−16). Next, we investigated associations with stuttering, also in Lifelines. 4.52% of the adult sample reported a history of stuttering. Results showed that a 1 SD decrease in rhythm scores was significantly associated with 7% lower odds of having a stutter (N = 34,798; OR = 0.93 [0.88– 0.98]; p = .0067) (Fig. 2). This was not the expected direction of association based on previous findings23,27,40,41.

Dyslexia and reading struggles

First, we investigated associations between musical rhythm discrimination abilities and a history of reading disorder. A 1 SD decrease in rhythm scores was associated with 41% higher odds of having received a dyslexia diagnosis, in the Vanderbilt Online Musicality Study (N = 2499; OR = 1.41 [1.14–1.72]; p = .00109). Second, we examined self-reported rhythm abilities and a history of reading disorder. A 1 SD decrease in rhythm scores was associated with 42% higher odds of having received a dyslexia diagnosis, in the Rhythm Production and Synchronization Study (N = 1398; OR = 1.42 [1.09 – 1.85]; p = .01033); and 30% higher odds in Lifelines (N = 31,864; OR = 1.30 [1.25 – 1.35]; p < 2.0 × 10−16). A 1 SD decrease in rhythm scores was also associated with 29% higher odds of having a history of reading struggles, in Lifelines (N = 32,321; OR = 1.29 [1.24–1.34], p < 2.0 × 10−16). In Lifelines, 9.31% of the adult sample reported a history of dyslexia, and 10.02% of the sample reported a history of reading struggles. In subsets of participants from the Rhythm Production and Synchronization Study, a 1 SD decrease in isochronous tapping accuracy was associated with 93% higher odds of having a dyslexia diagnosis (N = 626; OR = 1.93 [1.24–3.00]; p = .00305). Beat synchronization accuracy was not significantly associated with dyslexia (N = 538; OR = 1.45 [0.81–2.48]; p = .18114).

Developmental language disorder (DLD)

Here, we investigated associations between self-reported rhythm abilities and odds of reporting a history of DLD-related symptoms as measured by our DLD proxy variable, in Lifelines (see Methods (Cohort Details)). A 1 SD decrease in rhythm scores was associated with 22% higher odds of being identified as a DLD-likely case compared to DLD-not-likely controls (N = 35,069; OR = 1.22 [1.17–1.27]; p < 2.0 × 10−16). These results are consistent with previous work on relatively impaired rhythm abilities in children with DLD compared to typically developing children29,30. 7.43% of the adult sample was classified as a DLD-likely case.

Learning disabilities

Here, we investigated associations between self-reported rhythm abilities and a history of receiving support for learning disabilities, in Lifelines. 3.96% of the adult sample reported having a history of learning disabilities. A 1 SD decrease in rhythm scores was associated with 32% higher odds of learning disabilities (N = 34,374; OR = 1.32 [1.25–1.39]; p < 2.0 × 10−16).

Late talkers

Here, we investigated associations between musical rhythm discrimination abilities and late language emergence in the Rhythm Perception Study. Rhythm scores were not significantly associated with being a late talker (N = 545; OR = 1.11 [0.82–1.52]; p = .50), though the association trended in the expected direction.

Meta-analysis results

A mixed effects meta-analysis model using restricted maximum likelihood (REML) estimations showed that a 1 SD decrease in musical rhythm scores was associated with 1.33 times higher odds of reporting a history of speech and language problems or disorders (k = 16; OR = 1.33 [1.18–1.49]; p < .0001; Fig. 2; Supplementary Data S.1.1). Models included nested random effects to account for significant heterogeneity found across data cohorts (Q(df = 15) = 204.85; p < .0001), and for partially overlapping samples across datasets. Since lower rhythm scores were associated with lower risk of stuttering (compared to other speech-language disorders, where lower rhythm scores were associated with higher risk), we conducted an additional meta-analysis without stuttering included. Meta-analysis results were virtually unchanged without stuttering included in the model (OR = 1.34 [1.18–1.48], p = 6.07 × 10−66; Supplementary Data S.1.2). These findings converge with previous large-scale evidence showing that individuals with time-based congenital amusia, which is characterized by impaired rhythm and timing perception in musical stimuli occurring in the absence of brain injury or hearing loss, have significantly higher rates of neurodevelopmental disorders and learning disabilities compared to controls, including dyslexia, speech disorder, dyscalculia, attentional disorder, memory problems, and spatial orientation difficulties42.
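As an illustration of how study-level odds ratios can be pooled, the sketch below applies a simple DerSimonian-Laird random-effects estimator to the 16 rounded odds ratios and 95% CIs reported in the preceding subsections. This is a deliberately simplified stand-in: it is not the REML model with nested random effects described above, it ignores the partial overlap between samples, and it works from rounded inputs, so it should not be expected to reproduce the reported pooled estimate or Q statistic exactly. Function and variable names are hypothetical.

```python
import math

# (label, OR, CI low, CI high), as reported in the text above.
MODELS = [
    ("OM  therapy, discrimination", 1.16, 1.03, 1.30),
    ("RP  therapy, discrimination", 1.47, 1.12, 1.94),
    ("RPS therapy, self-report",    1.21, 1.04, 1.41),
    ("RPS therapy, beat sync",      1.72, 1.36, 2.18),
    ("RPS therapy, tapping",        1.53, 1.23, 1.88),
    ("LL  speech/articulation",     1.21, 1.16, 1.27),
    ("LL  stuttering",              0.93, 0.88, 0.98),
    ("OM  dyslexia, discrimination", 1.41, 1.14, 1.72),
    ("RPS dyslexia, self-report",   1.42, 1.09, 1.85),
    ("LL  dyslexia",                1.30, 1.25, 1.35),
    ("RPS dyslexia, tapping",       1.93, 1.24, 3.00),
    ("RPS dyslexia, beat sync",     1.45, 0.81, 2.48),
    ("LL  reading struggles",       1.29, 1.24, 1.34),
    ("LL  DLD proxy",               1.22, 1.17, 1.27),
    ("LL  learning disabilities",   1.32, 1.25, 1.39),
    ("RP  late talkers",            1.11, 0.82, 1.52),
]


def log_or_and_variance(odds_ratio, ci_low, ci_high, z_crit=1.96):
    """Log odds ratio and its sampling variance, assuming a Wald 95% CI."""
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * z_crit)
    return math.log(odds_ratio), se ** 2


def dersimonian_laird(effects, variances):
    """Random-effects pooling with the DerSimonian-Laird tau^2 estimator."""
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c)  # between-study variance, floored at zero
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, q, df, tau2


pairs = [log_or_and_variance(o, lo, hi) for _, o, lo, hi in MODELS]
effects = [y for y, _ in pairs]
variances = [v for _, v in pairs]
pooled, se, q, df, tau2 = dersimonian_laird(effects, variances)

print(f"Q({df}) = {q:.1f}, tau^2 = {tau2:.4f}")
print(f"pooled OR = {math.exp(pooled):.2f} "
      f"[{math.exp(pooled - 1.96 * se):.2f}, {math.exp(pooled + 1.96 * se):.2f}]")
```

Dropping the stuttering row from `MODELS` and re-running gives a rough analogue of the sensitivity analysis described above.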

To investigate whether other relevant factors such as socioeconomic status (SES) and music engagement explain the relationship between musical rhythm and a history of speech-language problems and disorders, we also conducted regression analyses with music engagement and educational attainment (a proxy for SES) included as covariates, in two cohorts (Supplementary Data S.9.1–S.9.5). In brief, associations between musical rhythm abilities and speech-language disorders were robust over and above the effects of socioeconomic and music engagement factors.

Taken together, Study 1 findings were consistent with predictions of the Atypical Rhythm Risk Hypothesis. Across several large cohorts, we found that having weaker rhythm skills, measured through self-report and task performance, was associated with increased odds of clinical speech-language problems and disorders (with the exception of late talker status and stuttering). These results extend similar previous findings in smaller samples of individuals with specific developmental speech-language disorders (see ref. 25 for review).

Study 2: Genetic results

In Study 2 (N = 7180), we systematically tested genetic predictions of the MAPLE framework24, which proposes shared genetic architecture between rhythm and language traits, in two independent cohorts (Vanderbilt Online Musicality Study and ABCD). Polygenic score (PGS) analyses were conducted (see Methods (Data Processing and Analyses)), for which separate PGSs were computed in each of the two cohorts: (1) PGSs for musical beat synchronization abilities (based on a GWAS in 606,825 individuals38); (2) PGSs for word reading abilities (based on a GWAS in 27,190 individuals39). Details of GWAS discovery samples, polygenic scoring, and target samples for PGS application are given in Methods (Cohort Details). All Study 2 statistical models controlled for age, sex, and population substructure variation (Supplementary Data S.6.1–S.7.1).

Shared genetic architecture between reading abilities and musical rhythm abilities

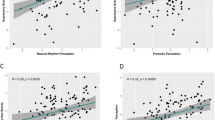

First, we investigated whether genetic predispositions for musical beat synchronization abilities are associated with language-related abilities (here, word reading). Beat synchronization PGSs were associated with higher oral word reading (i.e., decoding) scores, as measured by the Toolbox Oral Reading Recognition Test (T-OORT)43 (N = 5393; β = 0.05; SE = 0.01; p = .000107) in the ABCD study, including after controlling for the effects of word reading PGSs (β = 0.04; SE = 0.01; p = .00108). The tails of the beat synchronization PGS distribution are informative (Fig. 3A), with individuals in the highest PGS decile showing significantly better oral reading scores than those in the lowest decile (t (df = 1077) = − 3.69, p = .000232; see Methods (Data Processing and Analyses)). To contextualize the effect size of beat synchronization PGSs on word reading scores (1 SD increase in beat synchronization PGS corresponds to a 0.05 SD increase in reading scores), we draw readers’ attention to our findings that a 1 SD increase in word reading PGSs corresponds to a 0.14 SD increase in T-OORT word reading scores in the same ABCD cohort, after controlling for covariates (N = 5393; β = 0.14; SE = 0.01, p < 2.0 × 10−16). Genetic correlations between word reading and beat synchronization abilities (based on the same GWASs used here to derive PGSs) were recently reported32 to be rG = 0.18, SE = 0.04, p = 1.84 × 10−5.

Specifically, (A) shows oral reading scores as a function of beat synchronization PGSs in ABCD (N = 5393), where higher scores indicate better reading test performance and greater genetic predispositions for beat synchronization abilities, respectively; (B) shows musical rhythm scores as a function of word reading PGSs in the Vanderbilt Online Musicality Study (N = 1787), where higher scores indicate better rhythm test performance and greater genetic predispositions for word reading abilities, respectively; and (C) shows beat synchronization PGSs as a function of case (Yes) or control (No) status for speech-language therapy history in the Vanderbilt Online Musicality Study (N = 1669), where higher scores indicate greater genetic predisposition for beat synchronization abilities. For continuous phenotypes (A and B), means and SEs are shown for each decile of PGSs. For the binary phenotype (C) means, interquartile range (IQR), and total range of PGSs are shown for speech-language therapy cases (Yes) and controls (No). Box plots represent values between the first and third quartile (i.e., 25th to 75th percentile). Lower whiskers indicate values up to 1.5 * IQR below the first quartile, and upper whiskers indicate values up to 1.5 * IQR above the third quartile.

Next, we investigated whether genetic predispositions for word reading abilities are associated with musical rhythm discrimination abilities. Higher word reading PGSs were associated with significantly higher scores on the rhythm subtest of the Swedish Musical Discrimination Test44 (N = 1787; β = 0.09; SE = 0.025; p = .000579) in the Vanderbilt Online Musicality Study, including after accounting for the effects of rhythm PGSs in the model (β = 0.10; SE = 0.023; p = .00003). These effect sizes are similar to previously reported associations between beat synchronization PGSs and musical rhythm discrimination scores, e.g., in the Vanderbilt Online Musicality Study45: β = 0.11, p = 3.2 × 10−6; and the Study of Twin Adults: Genes and Environment (STAGE)46: β = 0.11, p < .001. Figure 3B illustrates mean scores on the musical rhythm discrimination task for each decile of word reading PGSs. Again, the tails of the word reading PGS distribution are informative (Fig. 3B), with individuals in the highest PGS decile showing significantly better rhythm discrimination scores than those in the lowest decile (t (df = 350) = − 2.56, p = .011; see Methods (Data Processing and Analyses)).
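The two kinds of PGS summaries used in this section (a standardized regression slope, and a comparison of the lowest and highest PGS deciles) can be sketched on simulated data as follows. Everything here is an assumption for illustration: the data are synthetic draws with a true standardized effect of 0.05, no covariates are adjusted for, and a Welch t-test is used for the decile comparison, which may differ from the test specified in the study's Methods.

```python
import math
import random
import statistics

random.seed(0)
N = 5393          # same size as the ABCD analysis sample, for scale only
TRUE_BETA = 0.05  # assumed standardized effect used to simulate the data

# Simulate standardized polygenic scores and a standardized phenotype.
pgs = [random.gauss(0.0, 1.0) for _ in range(N)]
noise_sd = math.sqrt(1.0 - TRUE_BETA ** 2)
score = [TRUE_BETA * g + random.gauss(0.0, noise_sd) for g in pgs]


def standardized_slope(x, y):
    """Slope of y on x after z-scoring both (equals the Pearson correlation)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def welch_t(a, b):
    """Welch's t statistic and approximate degrees of freedom for mean(a) - mean(b)."""
    va, vb = statistics.variance(a) / len(a), statistics.variance(b) / len(b)
    t = (statistics.fmean(a) - statistics.fmean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Rank participants by PGS and take the lowest and highest 10%.
ranked = sorted(zip(pgs, score))
decile = N // 10
bottom = [s for _, s in ranked[:decile]]
top = [s for _, s in ranked[-decile:]]

beta = standardized_slope(pgs, score)
t, df = welch_t(bottom, top)
print(f"standardized slope = {beta:.3f}")
print(f"lowest vs highest decile: t({df:.0f}) = {t:.2f}")
```

Computing the difference as lowest minus highest decile means a negative t indicates higher phenotype scores in the top PGS decile, matching the sign convention of the t statistics reported above.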

Shared genetic architecture between speech-language problems/disorders and musical rhythm abilities

Here, we investigated whether genetic predispositions for musical beat synchronization abilities are associated with the odds of having a history of speech-language disorders. Higher beat synchronization PGSs were associated with lower odds of reporting a history of speech-language therapy, in the Vanderbilt Online Musicality Study (N = 1669; OR = 0.83 [0.71–0.96]; p = .012). Figure 3C illustrates the difference in medians and distributions of beat synchronization PGS across the Yes (case) and No (control) responses for speech-language therapy history. A two-tailed independent samples t-test showed significantly lower beat synchronization PGS means in the cases (Yes: N = 218) compared to controls (No: N = 1456), t(df = 280) = −2.56, p = .0109 (see Methods (Data Processing and Analyses)).

To clarify whether genetic associations between musical rhythm abilities and speech-language problems played out through horizontal (direct) or vertical (mediated) pleiotropy, we tested a mediation model in the Vanderbilt Online Musicality Study cohort (N = 1787 [NFemale = 1326]; see Methods (Data Processing and Analyses)). Path analysis showed significant direct effects of rhythm PGS on speech-language therapy history (i.e., history of speech-language problems), but this relationship was not mediated by rhythm discrimination abilities (chi-sq = 23.32, p = .000013, CFI = 1.0; TLI = 1.0). These results further clarify our cross-trait PGS findings, providing evidence that the genetic pleiotropy underlying rhythm and language traits is of the horizontal and not vertical variety (i.e., not mediated) (see ref. 25 for further discussion on possible unmediated and mediated genetic pleiotropy between rhythm and speech-language disorders). Figure 4 shows all beta and p-values for the mediation model. See Supplementary Data S.8.1–S.8.2 for model details.

The direct path between the predictor and the outcome was significant, but no significant mediation effect was found. Results suggest horizontal (unmediated) genetic pleiotropy between rhythm and language traits. To control for relevant covariates, PGSs for rhythm were measured using the residuals of beat synchronization PGSs regressed onto age, sex, and the first 5 genetic ancestry PCs; and rhythm discrimination abilities were measured using the residuals of scores on the Swedish Musical Discrimination Task (Rhythm Subtest) regressed onto age and sex. β estimates and p-values for each pair of variables are indicated on the paths. Solid lines indicate a significant relationship. * p < 0.05; ** p < 0.01.

Taken together, Study 2 results show that polygenic architecture for musical rhythm skills (here, beat synchronization) explains a proportion of variability in measured language-related skills (here, word reading), and that the size of this effect is meaningful given the extent to which polygenic architecture for word reading explains variability for the same trait (measured word reading abilities) in the same cohort. Further, the polygenic architecture of rhythm predicts reading scores over and above the predictive value of the known polygenic architecture of reading, suggesting that reading skills draw upon biology shared with musical rhythm skills.

Discussion

Consistent with the predictions of the Atypical Rhythm Risk Hypothesis25, our epidemiological investigations (Study 1; N = 39,358) found substantial converging evidence that rhythm impairments are a transdiagnostic risk factor for developmental communication-related disorders. We showed this in the largest sample size to date, and across four independent cohorts, with rhythm abilities (perception, production, and synchronization) measured in several different ways. Specifically, greater rhythm impairments were associated with increased odds of childhood history of speech-language therapy, speech or articulation disorders, dyslexia, reading struggles, DLD, and learning disabilities. Supplementary analyses in two cohorts showed that these associations were not driven by SES, or music training or engagement levels. Our retrospective design (compared to cross-sectional designs) captured adults’ history of speech, language, or reading struggles experienced at earlier developmental stages, even if such problems had later resolved.

Study 2 (N = 7180) tested theories of genetic pleiotropy (shared genetic influences) between musicality and language traits24,25,47 as a partial explanation of associations found in Study 1. Cross-trait PGS analyses showed genetic associations between rhythm and reading traits in two cohorts, consistent with predictions, and suggesting shared genetic architecture between these traits. Given the present study design, which yielded a relatively small number of cases for each disorder (consistent with the population prevalence for developmental speech-language disorders), we could not systematically tease apart whether genetic associations between rhythm and reading play out differently in individuals with a history of developmental speech-language problems compared to others. This remains a question for future studies that target the enrollment of individuals with a developmental history of speech-language problems into genomics studies. Mediation analyses showed direct effects of genetic predispositions for musical rhythm abilities on developmental speech-language history, which were not mediated by phenotypic musical rhythm scores (consistent with horizontal pleiotropy). Thus, associations between rhythm and language during development are not necessarily due to cognitive transfer effects from sequence and timing processing and learning, to linguistic domains (e.g., phonological and reading development). Rather, such associations may be driven by other complex gene-brain-environment interplays. These findings further characterize the details of genetic associations found between rhythm and speech-language disorders, complementing recent findings about shared genetic architecture32.

Taken together, our results highlight the importance of both genetic and behavioral individual differences in rhythm abilities as potential risk-resilience factors for communication disorders. Our results are consistent with, and complementary to, a few different lines of research: (1) Our large-scale results on rhythm-dyslexia associations expand findings from prior case/control studies in modest samples, which show less accurate musical rhythm task performance in children and adults with dyslexia compared to controls48,49,50,51. (2) Our results complement prior multidisciplinary investigations showing shared development, neurobiology, and evolution of musicality and language-related traits32,52,53,54,55. (3) Our prevalence estimates for DLD, stuttering, and dyslexia are consistent with prior estimates, and we provided a novel estimate of 10% prevalence for reading struggles.

Our results diverge from previous studies in that rhythm and stuttering were not associated in the direction expected based on the literature. This could be due to our focus on individual variability in rhythm skills within individuals who stutter(ed), in contrast with case/control designs27,40,41; or because individuals who stutter(ed) may be exposed to rhythm-focused therapies, improving their rhythm abilities. While we cannot test this conjecture in the present data, we suggest it as a potential explanation that should be tested systematically in future research, as there is a substantial history of rhythmic speech or singing and other music therapies being used to treat stuttering56,57. Future studies should examine details about the duration and type of therapy received, to improve our understanding of whether rhythm-based interventions could have long-term effects on speech-language disorder traits (as they have mostly been examined previously for their effects on more temporary states58).

We argue based on current results and prior research that musical rhythm abilities can be considered a relevant transdiagnostic dimension or domain for developmental speech-language disorders, as previously proposed25,47. Transdiagnostic approaches such as the RDoC model, which has been used previously to frame research in psychiatric disorders36,37, are particularly useful here, given high comorbidity between distinct speech-language and reading disorders34. Transdiagnostic domains based on rhythm could include perception, production, and synchronization skills. Further, the present work highlights rhythm impairment as a clinically-relevant risk factor, alongside other commonly acknowledged or yet-to-be determined factors. Accordingly, the development and validation of musical rhythm based screening tools could enhance current efforts for identifying children at-risk for developmental speech-language disorders, especially individuals who are missed by current efforts16,17,18,20 (see ref. 58 for an extended discussion of rhythm screeners for developmental speech-language disorders). Other relevant domains for speech-language disorders may include sensorimotor, cognitive, or social processes that overlap to some extent with each other, and with rhythm abilities.

The current study has a few limitations. First, the number of cases was modest within certain cohorts and statistical models (e.g., 10–13 Dyslexia cases in RPS models; 43 late talkers in the RP model; Fig. 2), making these models statistically underpowered. Conversely, cases were very well represented in several other models (e.g., 2713 Dyslexia cases and 2424 DLD cases in LL models). Second, the present data do not allow us to systematically investigate whether a history of particular therapies or interventions (e.g., for stuttering, or history of speech-language therapy) influenced the phenotypic and genetic relationships found here. Future studies should examine whether childhood speech-language therapy can have lasting effects on musicality-language associations. Third, as is common in the literature, PGS models were derived from currently existing GWAS summary statistics from studies in individuals with European genetic ancestry, potentially limiting their overall generalizability. Future work should include more diverse genetic ancestries59,60, and musicality phenotypes across diverse cultural groups61.

Based on the current data, we cannot directly assess how clinically impactful these insights about rhythm skills as a correlate or risk factor for developmental speech-language disorders will be, relative to other known predictors and dimensions. Our results suggest shared genetic influences, with potential downstream effects on shared neurobiology, aligning with previous theoretical work. These insights could have future clinical value beyond the correlations observed here, including for precision medicine approaches which rely on better understanding individual differences. Therefore, future efforts in building and sharing large-scale epidemiological and genomic data focused on communication-related traits are important to expand the current evidence base.

This work lays the foundation for future investments by the communication sciences and disorders research community (among others) in studying musical rhythm skills. Individual differences in rhythm abilities (and future screening tools based on rhythm) may identify a substantial proportion of children with developmental speech-language disorders that are currently being missed. Future directions could also include longitudinal investigations of the role of rhythm in language outcomes across development, for example, examining the role of predispositions for rhythm traits across different inflection points known to be important for the development of temporal and motor processing, language skills, and domain-general cognitive mechanisms (e.g., executive function). Such efforts could enable transdiagnostic and dimensional approaches to advancing personalized medicine (e.g., through identifying certain dimensions that better predict treatment response), paving the way for improved treatment outcomes and quality of life for those living with communication-related disorders.

Methods

Results reported here draw on data from five study cohorts: the Vanderbilt Online Musicality Study; the Rhythm Perception Study; the Rhythm Production and Synchronization Study; the Adolescent Brain Cognitive Development (ABCD) Study; and Lifelines. Cohort details are described below, along with details on data processing and analyses. All relevant ethical guidelines, regulations, and data protections were adhered to throughout, and all data collection (both primary and secondary) received approval from an ethics committee or institutional review board, as detailed for each cohort below.

Cohort details

Vanderbilt online musicality study

The Vanderbilt Online Musicality Study45,62 investigated the genomics of musicality, including musical rhythm perception and music engagement, as well as relationships between musicality and speech-language traits. Procedure and sample details for the current study are given below.

Participants and Procedures

The present analyses included N = 2535 participants (1833 females; aged 18–88; MeanAge = 42.27 years; SD = 15.81 years) of the original study cohort for phenotypic analyses, and N = 1792 participants (1326 females; aged 18–88; MeanAge = 44.96 years; SD = 16.23 years) for genetic analyses. Participants completed an internet-based battery of surveys and behavioral tests administered through Research Electronic Data Capture (REDCap) software, a secure web platform for building and managing research databases and surveys63. These included a brief musical engagement questionnaire, a brief headphone test, two auditory tasks (a musical rhythm task and a speech rhythm task), and a demographic questionnaire. A subset of participants consented to DNA extraction from mailed-in saliva samples. All procedures, including the informed consent forms, were approved by the Vanderbilt Institutional Review Board. Speech rhythm task data are reported elsewhere and were not analyzed in the current study62.

Measures

Swedish musical discrimination test (rhythm subtest). Participants were asked to listen to 18 pairs of rhythmic sequences and decide whether the sequences were the same or different. Each rhythmic sequence comprised brief sine tones of 60 ms duration each (500 Hz; inter-onset intervals: 150, 300, 450, or 600 ms). Each sequence in a pair of rhythms was separated by a 1 s gap. Participants only heard each pair once before making their same/different decision. The test included 2 practice trials and an attention check item interspersed with test trials. The task yielded rhythm discrimination scores from 0 to 18, which were standardized (z-scored) prior to logistic regressions, and inverse-rank transformed prior to linear regressions for the analyses reported here, to mitigate skews in the data (consistent with prior studies44) and meet the assumptions of normality of residuals.

Self-reported history of speech-language therapy. Participants were asked a single-item question, “Did you receive speech language therapy as a child?”, with response options “Yes”, “No”, or “I don’t know”. “I don’t know” responders (N = 28) were excluded from models.

Self-reported history of dyslexia. Participants were asked a single-item question, “Have you ever been diagnosed with dyslexia?”, with response options “Yes”, “No”, or “I don’t know”. Those who responded “I don’t know” (N = 19) were excluded from the models.

Polygenic scores (PGSs) for word reading abilities. PGSs are weighted sums of the estimated effects of a large number of genetic variants on a specific phenotype, with the weights derived from a GWAS. In the present study, we derived PGSs for word reading in our target sample (Vanderbilt Online Musicality Study participants), based on models derived from a GWAS of measured oral reading (i.e., reading words aloud) performance in a discovery sample of N = 27,180 individuals of European genetic ancestries, meta-analyzed across 18 cohorts39. PGSs for individual participants were computed using PRS-CS software64 with default parameters. Once PGSs were derived for each participant, they were standardized (z-scored) within each genetic ancestry group, consistent with the approach previously used in the Vanderbilt Online Musicality Study45. This approach improves the application of PGS models to mismatched-ancestry target samples (useful here due to the presence of more diverse genetic ancestries represented in our target sample compared to the discovery sample).
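
To make the definition above concrete, the following minimal Python sketch computes a PGS as a weighted sum of allele dosages and then z-scores the result within ancestry groups. It is illustrative only and is not the pipeline used here (weights in the present study came from PRS-CS); the function names, variant IDs, weights, dosages, and group labels are all hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev


def polygenic_score(dosages, weights):
    """Weighted sum of allele dosages (0-2) using per-variant effect weights."""
    return sum(weights[v] * d for v, d in dosages.items() if v in weights)


def zscore_within_groups(scores, groups):
    """Standardize scores separately within each group label (sample SD)."""
    by_group = defaultdict(list)
    for s, g in zip(scores, groups):
        by_group[g].append(s)
    params = {g: (mean(v), stdev(v)) for g, v in by_group.items()}
    return [(s - params[g][0]) / params[g][1] for s, g in zip(scores, groups)]


# Hypothetical per-variant weights and four hypothetical participants.
weights = {"rs1": 0.10, "rs2": -0.05, "rs3": 0.02}
people = [
    {"rs1": 2, "rs2": 0, "rs3": 1},
    {"rs1": 1, "rs2": 1, "rs3": 0},
    {"rs1": 0, "rs2": 2, "rs3": 2},
    {"rs1": 2, "rs2": 1, "rs3": 1},
]
groups = ["A", "A", "B", "B"]  # hypothetical ancestry group labels

scores = [polygenic_score(p, weights) for p in people]
z_scores = zscore_within_groups(scores, groups)
```

Standardizing within groups means each group's scores have mean 0 and SD 1, so a participant's z-score is interpreted relative to others in the same ancestry group.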

Polygenic scores (PGSs) for beat synchronization abilities. PGSs for beat synchronization abilities utilized in the present analyses were previously generated as part of the Vanderbilt Online Musicality Study. Beat synchronization PGSs were derived from a large GWAS of beat synchronization skills38, using PRS-CS software64 with default parameters.

Rhythm perception study

The Rhythm Perception Study38 (referred to in ref. 38 as Phenotype Experiment 1) aimed to determine whether self-reported rhythm measures (specifically, responses to the question “can you clap in time with a musical beat?”) were correlated with task-based rhythm perception measures. This study was part of a larger GWAS of musical rhythm abilities, specifically of beat synchronization38. Procedure and sample details for the current study are given below.

Participants and procedures

The study sample consisted of N = 724 participants (333 females), aged 18–73 years old (MeanAge = 36.1 years; SD = 10.9 years), who were recruited anonymously through Amazon’s Mechanical Turk.

Measures

Self-reported history of speech-language therapy. Participants were asked the single-item question, “Did you receive speech language therapy as a child?”, with response options “Yes”, “No”, or “I don’t know”. Those who responded “I don’t know” (N = 16) were excluded from the models.

Self-reported late talker status. Participants were asked the single-item question “Were you a late talker?”, with response options “Yes”, “No”, or “I don’t remember”. Those who responded “I don’t remember” (N = 147) were excluded from models.

Beat-based advantage (BBA). The BBA is a 32-item musical rhythm perception task. On each trial, participants heard three rhythms (separated by 1500 ms of silence), and decided whether the third rhythm was the same or different from the first two (which were always identical). On a randomized half of the trials, ‘simple’ or beat-based rhythms were presented, and on the other half, ‘complex’ or syncopated rhythms were presented (usually more challenging to discriminate). Each rhythm was presented using pure tone stimuli in one of six frequencies (294, 353, 411, 470, 528 and 587 Hz, selected at random) and one of four durations (interstimulus interval of 220, 230, 240 and 250 ms). The task yields a raw accuracy score, from which d’ scores (a sensitivity index based on hit and false-alarm rates) were computed, as derived from signal detection theory65 and consistent with the approach used previously to analyze BBA data66,67. d’ scores were used as the dependent variable in all analyses. For more information on rhythmic stimuli included in the BBA, see ref. 38 (Phenotype Experiment 1).
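
As an illustration of how a d’ score is obtained in signal detection theory, the sketch below computes d’ as the difference between the z-transformed hit rate and false-alarm rate. This is a generic textbook formulation, not the scoring code used for the BBA. The function name is hypothetical, and both the log-linear correction (adding 0.5 to each count) and the choice of which response counts as a "hit" are assumptions made for the example.

```python
from statistics import NormalDist


def d_prime(hits, misses, false_alarms, correct_rejections):
    """Sensitivity index d' = z(hit rate) - z(false-alarm rate).

    A log-linear correction (assumed here) keeps both rates away from
    0 and 1, where the z-transform would be infinite.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    return z(hit_rate) - z(fa_rate)


# Hypothetical 32-trial session: 16 "different" trials, 16 "same" trials.
good_listener = d_prime(hits=14, misses=2, false_alarms=3, correct_rejections=13)
at_chance = d_prime(hits=8, misses=8, false_alarms=8, correct_rejections=8)
```

A participant responding at chance has equal hit and false-alarm rates and therefore a d’ of zero, whereas better discrimination yields larger positive values.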

Rhythm Production and Synchronization Study

The Rhythm Production and Synchronization Study38 (referred to as Phenotype Experiment 2 in ref. 38) aimed to determine whether a self-reported beat synchronization measure (i.e., “can you clap in time with a musical beat?”) is a valid proxy for objectively measured beat synchronization ability. Further, the study aimed to explore behavioral associations between rhythm/beat synchronization and assorted traits found to be genetically correlated with beat synchronization. The study was pre-registered through the Open Science Framework (https://osf.io/exr2t) in July 2020, prior to data collection. Sample characteristics and study procedures for the current study are given below.

Participants and procedures

This internet-based study consisted of a beat synchronization task to assess the accuracy of participants’ tapping in time with musical excerpts, and a series of questionnaires assessing self-reported rhythm, music engagement, selected health traits, confidence as a personality trait, and demographics. A total of N = 1412 participants (728 female), aged 18–77 (MeanAge = 36.34; SD = 11.93), were included in this study, a subset of whom also had data for the isochronous tapping task (N = 628) and a further subset of whom had data for the beat synchronization task (N = 540). Tapping responses were measured using the Rhythm Experiment Platform (REPP)68, a robust cross-platform solution for measuring sensorimotor synchronization in online experiments that has high temporal fidelity and can work efficiently using hardware and software available to most participants.

Measures

Isochronous tapping task. This task served as practice trials for the musical beat synchronization task. It consisted of four 15 s trials of isochronous tapping to a metronome beat (two trials with an inter-onset interval of 500 ms and two trials with an inter-onset interval of 600 ms). Participants were included in analyses if they had at least one valid trial per inter-onset interval (i.e., 500 ms or 600 ms). The task yields a measure of tapping asynchrony, for which lower scores indicate better beat synchronization accuracy. Tapping asynchrony was therefore reverse-coded for analyses to remain consistent with the direction of all other rhythm phenotypes, i.e., higher scores indicate better rhythm performance (see ref. 68 for more details on the implementation of the practice phase).

Musical beat synchronization abilities. Following the practice phase (isochronous tapping), participants were presented with the main beat synchronization task, consisting of eight 30 s trials (four musical excerpts, each played twice). The order of presentation of the practice trials and test trials was randomized for each participant. On each trial, participants heard a song and were asked to tap to the musical beat until the music ended. To help participants find the beat, a metronome marking the beats in the first 11 s of the clip was added to the stimulus. After the metronome stopped, participants were instructed to continue tapping to the same beat at a steady pace (see ref. 68 for more details and validation of the beat synchronization task). Tapping responses were measured through REPP68.

Self-reported rhythm abilities. Participants responded to 7 questions about self-reported rhythm abilities, which were then aggregated into an overall score. The composite score taps into several self-reported interactions with rhythmic stimuli including beat synchronization abilities, importance of rhythmic ability to identity, struggles with rhythm perception, and urge to groove or move to a beat.

Adolescent brain cognitive development (ABCD) study

The ABCD study is the largest long-term study of brain development and child health ever conducted in the United States (US). Coordinated data collection is ongoing across 21 sites in the US, aiming to follow a cohort of 11,500 nine- and ten-year-old children, and their parents/guardians, from pre-adolescence to young adulthood (for a total of ten years). ABCD study data comprise genetic data and other biospecimens, as well as a comprehensive set of phenotypes spanning the following domains: physical health, mental health, brain imaging, biospecimens, neurocognition, substance use, and culture and environment. ABCD genetic and phenotypic data used in the present study were obtained from the open-access National Institute of Mental Health (NIMH) Data Archive (https://data-archive.nimh.nih.gov/abcd). The ABCD cohort also includes data from 1720 twins. Participating children and families were largely recruited through US school systems, optimizing for diversity and representativeness of the national population on dimensions such as gender, race and ethnicity, SES, and urbanicity. For more information on recruitment and overall design of the ABCD study, see ref. 69 for single-birth children and ref. 70 for twins.

Participants

N = 5460 participants of European genetic ancestry (2571 females; MeanAge = 9.46 years; SD = 0.54 years) were included in the study, after applying genetic quality control (QC) protocols to open-access imputed genetic data at the individual level (see Supplementary Information for ABCD QC details). The sample included from ABCD consists of individuals for whom genetic data passed QC, and for whom information for age, sex, and reading scores were available.

Measures

Polygenic scores (PGSs) for beat synchronization. Similar to the PGS models for beat synchronization derived for Vanderbilt Online Musicality Study participants45, we derived PGSs for musical beat synchronization in ABCD participants (our target sample here) from the beat synchronization GWAS38, using PRS-CS models64.

PGS for word reading. We derived PGSs for word reading for ABCD participants (our target sample) from a leave-one-out version of a meta-GWAS of word reading39 that excluded the ABCD cohort.

NIH Toolbox oral reading recognition test (T-ORRT). The T-ORRT is a standardized assessment of reading decoding skills that is part of the NIH Toolbox Cognition Battery43. In this test, participants are asked to read aloud letters and words, pronouncing them as accurately as possible. This standardized test of oral reading ability yields an uncorrected as well as an age-adjusted score. Uncorrected T-ORRT scores (i.e., not adjusted for age norms) were used in all present analyses, since age was included as a covariate in all models. The task yielded reading scores from 67 to 119, which were inverse-rank transformed prior to linear regressions for the analyses reported here, to mitigate skews in the data and meet the assumptions of normality of residuals.

Lifelines

Lifelines is a multi-disciplinary prospective population-based cohort study examining, in a unique three-generation design, the health and health-related behaviors of 167,729 persons living in the North of the Netherlands. It employs a broad range of investigative procedures in assessing the biomedical, socio-demographic, behavioral, physical and psychological factors which contribute to the health and disease of the general population, with a special focus on multi-morbidity and complex genetics. Design and sample characteristics of the Lifelines study have been previously detailed71,72.

Participants

In September 2022, the Speech, Language and Musicality questionnaire was circulated among 101,000 Lifelines participants of 18 years and older. A total of 35,179 Lifelines participants (21,328 females and 13,851 males; aged 18–96 years) completed the questionnaire in September or October 2022. The general Lifelines protocol has been approved by the University Medical Center Groningen medical ethical committee (2007/152), and the Speech, Language and Musicality study was reviewed by the medical ethical committee of the Erasmus Medical Center (MEC-2022-0313). All participants gave informed consent prior to participating in Lifelines and prior to taking part in the survey.

Measures

All questions were asked in Dutch; English translations of questions and answer options are given below. For original questions (in Dutch), see Supplementary Data S.9.6.

Self-reported rhythm abilities. Participants were asked two items about self-reported rhythm abilities, which were composited into an overall score for self-reported rhythm. Included items were: “I can tap in time to a musical beat” and “I struggle to feel the rhythm when listening to, playing, or dancing with music”, which could be answered using a seven-option Likert scale ranging from “completely disagree” to “completely agree”. The final composite score ranged from 2 to 14, with a higher score indicating better self-reported rhythm ability.
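
A minimal sketch of how such a two-item composite could be scored is shown below. It assumes that responses are coded 1 ("completely disagree") to 7 ("completely agree") and that the negatively worded item ("I struggle to feel the rhythm...") is reverse-coded so that higher composite scores indicate better self-reported rhythm; the exact coding is not specified above, and the function and argument names are hypothetical.

```python
def rhythm_composite(tap_in_time, struggle_with_rhythm):
    """Sum of two 7-point Likert items, reverse-coding the negative item.

    Assumed coding: 1 = completely disagree ... 7 = completely agree.
    Returns a score from 2 (worst) to 14 (best self-reported rhythm).
    """
    for value in (tap_in_time, struggle_with_rhythm):
        if not 1 <= value <= 7:
            raise ValueError("Likert responses must be between 1 and 7")
    # Reverse-code the negatively worded item: 1 -> 7, 7 -> 1.
    return tap_in_time + (8 - struggle_with_rhythm)


best = rhythm_composite(tap_in_time=7, struggle_with_rhythm=1)
worst = rhythm_composite(tap_in_time=1, struggle_with_rhythm=7)
```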

Self-reported dyslexia. The presence of dyslexia was examined using the question “People with dyslexia read very slowly and/or have a lot of difficulty with spelling. Do you have dyslexia?”. Participants who answered “Yes, I have been diagnosed with dyslexia” and “I presume I have dyslexia, but I have never been tested” were considered dyslexic (N = 2719), those who answered “No” were considered to not have dyslexia (N = 29,234), and those who answered “No, I suppose not” were excluded (N = 3175).

Self-reported stuttering. Presence of stuttering at present or in the past was examined using the question, “People who stutter have difficulty with pronouncing words fluently. For example, they repeat syllables or words, linger on sounds and/or block while talking. Have you ever stuttered, or do you currently stutter?”. Participants were included as a person who stutters or stuttered if they answered one of the following: “Yes, I stuttered as a child, but I do not stutter anymore”; “Yes, I started stuttering as a child and I still have a stutter”; “Yes, as a young person/adult I stuttered for a while, but I do not stutter anymore” or “Yes, I started stuttering as a young person/adult and I still stutter”, and if they also reported stuttering onset before 20 years of age (N = 1510). Those who answered “No, I have never stuttered” were considered non-stuttering controls (N = 33,385). People who reported an age of onset of stuttering at or later than 20 years of age (N = 61), a duration of maximally one year (N = 160), or did not report an age of onset (N = 32), were excluded from analyses.

Self-reported speech or articulation problem. Presence of a speech or articulation problem was examined using the question “People with a speech or articulation problem may for example, lisp, have an unclear pronunciation and/or use the wrong sounds. Do you have or did you have a speech or articulation problem?”. Participants who answered “Yes, I have had a speech or articulation disorder in the past” and “Yes, I still have a speech or articulation disorder” were considered to have a speech or articulation problem (N = 1727), those who answered “No” were considered to not have a speech or articulation problem (N = 29,536), and those who answered “No, I suppose not” were excluded (N = 3877).

Self-reported learning disability. The presence of a learning disability was examined using the question “Did you receive extra support in school for a learning disability?”. Participants who answered “Yes” were considered to have a learning disability (N = 1314), those who answered “No” were considered to not have a learning disability (N = 33,159), and those who answered “I’m not sure” were excluded (N = 653).

Self-reported reading problems. The presence of reading problems during primary education was examined using the question “Did you struggle in primary school with learning to read, compared to your peers?”. Participants who answered “I struggled more” or “I struggled much more” were considered to have a reading problem (N = 2954), participants who answered “I struggled much less”, “I struggled less” or “Similar to my peers” were considered not to have a reading problem (N = 29,469), and those who answered “I do not know (anymore)” were excluded (N = 2756).

DLD proxy. Since DLD usually requires a formal diagnosis by a speech-language pathologist, direct ascertainment of DLD is less feasible in large-scale data cohorts and epidemiological efforts. Researchers have therefore employed creatively designed approaches for developing DLD proxies, including assigning cut-off scores for standardized language measures73; machine-learning techniques for classifying DLD cases15,74; or thresholds based on self-reported speech and language measures4, similar to the approach we used here. Our DLD proxy incorporated many key symptoms of DLD, namely consistent and prominent difficulties with spoken expression, written expression, and word finding, based on self-report items. DLD was inferred through three self-report questions: (1) “Do people correct your writing because of grammar or spelling mistakes?”; (2) “Do you have a hard time finding the right words or making your sentences express what you want to say?”; and (3) “How often do you have words ‘on the tip of your tongue’ or word finding problems?”. Responses to these questions were on a four-option scale (“very often/all the time”, “somewhat often/more often than most people I know”, “occasionally/about as often as most people”, and “rarely”). A binary DLD proxy score was derived to enable comparison with odds ratios across other research questions and phenotypes. This process yielded two response statuses: (a) DLD likely (cases) and (b) DLD not likely (controls). Participants were assigned to DLD likely (case) status (N = 2426) if they responded with the lowest score (experiences difficulties with language “very often”) on at least one of three domains of difficulty (i.e., written expression; spoken expression; word finding), in addition to responding with the second lowest score (experiences difficulties with language “somewhat often”) on at least two of the three domains. All other participants (N = 32,753) were assigned to the DLD not likely status (controls).
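
The case-assignment rule can be sketched as a small function. The wording of the rule leaves some room for interpretation, so the version below encodes one possible reading: at least one domain rated "very often", plus at least two further domains rated "somewhat often" or more severe. This reading, the numeric response coding, and all names are assumptions for illustration and may not match the scoring actually applied.

```python
# Assumed numeric coding of the four response options (lowest = most severe).
VERY_OFTEN, SOMEWHAT_OFTEN, OCCASIONALLY, RARELY = 1, 2, 3, 4


def dld_likely(written, spoken, word_finding):
    """Return True for 'DLD likely' (case), False for 'DLD not likely'.

    One reading of the rule: one or more domains at 'very often', and at
    least two of the remaining domains at 'somewhat often' or worse.
    """
    responses = (written, spoken, word_finding)
    n_very = sum(r == VERY_OFTEN for r in responses)
    n_somewhat_or_worse = sum(r <= SOMEWHAT_OFTEN for r in responses)
    # Set aside one 'very often' domain, then require two more affected domains.
    return n_very >= 1 and (n_somewhat_or_worse - 1) >= 2


case = dld_likely(written=VERY_OFTEN, spoken=SOMEWHAT_OFTEN, word_finding=SOMEWHAT_OFTEN)
control = dld_likely(written=VERY_OFTEN, spoken=SOMEWHAT_OFTEN, word_finding=OCCASIONALLY)
```

Under this reading, a single severe difficulty is not sufficient for case status; difficulties must be present across all three domains.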

Data processing and analyses

All analyses were conducted using R (v. 4.2.3). Specific R packages, functions, and specifications are mentioned below (italicized).

Data transformations

Data from all cohorts were z-scored for plotting distributions (Fig. 1). Isochronous tapping data (RPS cohort) were winsorized to within ±3 SDs using Winsorize {DescTools 0.99.48}, to correct for high kurtosis. Winsorized data for isochronous tapping were used in logistic regression models, meta-analysis, and in the forest plot (Fig. 2).
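
For readers unfamiliar with winsorizing, the sketch below shows the general idea in Python: values beyond 3 SDs from the mean are pulled in to the boundary rather than removed. It is a generic illustration with hypothetical data and a hypothetical function name, not a reproduction of the R call used here.

```python
from statistics import mean, stdev


def winsorize_sd(values, n_sd=3.0):
    """Clamp values to the interval [mean - n_sd*SD, mean + n_sd*SD]."""
    m, s = mean(values), stdev(values)
    lower, upper = m - n_sd * s, m + n_sd * s
    return [min(max(v, lower), upper) for v in values]


# Hypothetical asynchrony values with one extreme observation.
values = [100.0] * 19 + [1000.0]
winsorized = winsorize_sd(values)
```

Because extreme observations are retained at the boundary value, the sample size is unchanged while the influence of outliers on kurtosis is reduced.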

Continuous phenotypic variables (i.e., musical rhythm discrimination scores: OM; oral word reading scores: ABCD) were inverse rank normalized to meet the assumption of normality of residuals in linear regression models (Fig. 3, panels A and B). Residuals for musical rhythm discrimination scores (Fig. 3, panel B) were first extracted from a linear model with word reading PGSs as the predictor, and age, sex, and the first 5 genetic ancestry PCs as covariates. Linear models were fit using lm {stats 4.2.3}. Residuals were ranked using rank {base 4.2.3}, with specifications for tied values to be assigned in random order. Ranked residuals were then normalized using norm {base 4.2.3}. Oral word reading scores (Fig. 3, panel A) were also inverse rank normalized. Inverse rank normalized musical rhythm discrimination and oral word reading scores were used in linear regression models.
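
The rank-then-normalize step can be sketched as follows. This Python version ranks values with ties broken in random order and maps each rank to a standard normal quantile. The rank-to-probability offset, (rank − 0.5)/n, is an assumption (other offsets are also in common use and the one applied here is not stated), and the function name and seed are hypothetical.

```python
import random
from statistics import NormalDist


def inverse_rank_normalize(values, seed=0):
    """Map values to normal quantiles via their ranks (random tie-breaking)."""
    rng = random.Random(seed)
    n = len(values)
    # Sort indices by value; the random second key orders tied values randomly.
    order = sorted(range(n), key=lambda i: (values[i], rng.random()))
    ranks = [0] * n
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank
    z = NormalDist().inv_cdf  # standard normal quantile function
    # Assumed offset: (rank - 0.5) / n keeps probabilities strictly in (0, 1).
    return [z((r - 0.5) / n) for r in ranks]


transformed = inverse_rank_normalize([3, 1, 2])
tied = inverse_rank_normalize([5, 5, 5, 5])
```

The transformed values are symmetric around zero regardless of how skewed the input is, which is why the transform helps residuals approach normality.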

Phenotypic analyses

Logistic regression models were tested using glm {stats 4.2.3}, with model family specified as “binomial”. In each model, rhythm phenotypes were predictors; speech-language problem or disorder phenotypes were outcome variables (binary coded for case-control status); and age and sex were included as covariates (See Supplementary Data S.2.1–S.5.6 for additional details). Individuals who could not be coded as either cases or controls (e.g., “I don’t know”; “I’m not sure”) were excluded listwise from models. Similarly, individuals who could not be coded as either male or female sex were excluded listwise from models. Lower and upper confidence intervals (based on 95% confidence level) were determined using R confint {stats 4.2.3}.
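
To show how a logistic regression coefficient relates to a reported odds ratio, the sketch below converts a log-odds coefficient and its standard error into an OR with a Wald-type 95% confidence interval. This is an approximation for illustration: R's confint for glm fits typically returns profile-likelihood intervals, which can differ slightly from Wald intervals. The function name and example values are hypothetical.

```python
import math
from statistics import NormalDist


def odds_ratio_wald(beta, se, level=0.95):
    """Return (OR, lower, upper) from a log-odds coefficient and its SE."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95%
    return (
        math.exp(beta),
        math.exp(beta - z_crit * se),
        math.exp(beta + z_crit * se),
    )


# Hypothetical coefficient for a z-scored rhythm predictor.
or_, lower, upper = odds_ratio_wald(beta=0.25, se=0.10)
null_or, null_lower, null_upper = odds_ratio_wald(beta=0.0, se=0.10)
```

With a z-scored predictor, the OR describes the change in odds of case status per one-SD difference in the rhythm measure, and an interval that excludes 1 corresponds to a significant association at the chosen level.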

Meta-analysis

A nested linear mixed-effects meta-analysis model was tested using R metafor 4.0 (Viechtbauer, 2020), using REML model specifications. ORs, SEs, and p-values derived from the logistic regression models were used as inputs for the meta-analysis. The data were nested to account for sample overlap (e.g., within the RPS cohort, beat synchronization accuracy was measured in a subset of the participants for whom isochronous tapping accuracy was measured, which in turn was measured in a subset of participants in which self-reported rhythm abilities were measured). Details of the nesting structure are given in Supplementary Data S.1.3. Meta-analysis results (meta OR, confidence intervals, and p-values) were visualized using R forestploter 1.1, alongside ORs, confidence intervals, and p-values for each logistic regression model tested (Fig. 2 and Supplementary Data S.1.1 and S.1.2).
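
The core idea of pooling odds ratios can be illustrated with a much simpler model than the one fitted here. The Python sketch below performs fixed-effect inverse-variance pooling on the log-odds scale; it deliberately ignores the nesting structure and random effects of the multilevel REML model described above, so it would not reproduce the reported estimates. Function names and inputs are hypothetical, and SEs are assumed to be on the log-odds scale.

```python
import math
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def se_from_ci(lower, upper):
    """Recover the log-scale SE from a 95% CI reported on the OR scale."""
    return (math.log(upper) - math.log(lower)) / (2 * Z_95)


def pool_fixed_effect(odds_ratios, ses):
    """Inverse-variance weighted pooled OR with a 95% CI (fixed effect)."""
    weights = [1 / se ** 2 for se in ses]
    log_ors = [math.log(o) for o in odds_ratios]
    pooled = sum(w * y for w, y in zip(weights, log_ors)) / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    return (
        math.exp(pooled),
        math.exp(pooled - Z_95 * pooled_se),
        math.exp(pooled + Z_95 * pooled_se),
    )


# Two hypothetical models with identical ORs and SEs.
pooled_or, pooled_lower, pooled_upper = pool_fixed_effect([1.5, 1.5], [0.2, 0.2])
```

Pooling two equally precise estimates leaves the point estimate unchanged but narrows the interval, since the pooled SE shrinks by a factor of √2.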

Polygenic score analyses

Linear regression models were tested using lm {stats 4.2.3}. Models included either musical rhythm discrimination or oral word reading scores as outcome variables; word reading and/or beat synchronization PGSs as predictors; and age, sex, and up to 6 genetic ancestry PCs as covariates (see Supplementary Data S.6.1, S.6.2, S.7.1–S.7.3 for further detail on the models). An additional logistic regression model (in OM data) was tested using glm {stats 4.2.3}, with model family specified as “binomial”. Beat synchronization PGSs were the predictor; speech-language therapy history status was the outcome; and age, sex, and 5 genetic ancestry PCs were included as covariates (see Supplementary Data S.6.3 for further details of the models). Individuals who could not be coded as either cases or controls (e.g., “I don’t know”; “I’m not sure”) were excluded listwise from models. Similarly, individuals who could not be coded as either male or female sex were excluded listwise from models. Lower and upper confidence intervals (based on 95% confidence level) were determined using R confint {stats 4.2.3}.

Rhythm-language mediation model

The model (in OM cohort data) was tested using the lavaan 0.6.18 package (Rosseel, 2012) with default model specifications, with beat synchronization PGSs as the predictor, musical rhythm discrimination scores as potential mediators, and speech-language therapy history status as the outcome variable (see Supplementary Data S.8.1 and S.8.2 for further details on the model). Residuals for musical rhythm discrimination scores were extracted from a linear model. Covariates were age, sex, and 5 genetic ancestry PCs.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Data availability

Secondary data used in this study for the Vanderbilt Online Musicality Study, the Rhythm Perception Study, and the Rhythm Production Study are available publicly at https://osf.io/myxgk. Data collected or generated for this study include data in the Lifelines cohort, and derived data in the ABCD cohort, for which individual-level data are restricted based on a Lifelines Data (and Material) Transfer Agreement and ABCD Data Use Certificate. Access to Lifelines data is available to authorized users by making an application through https://www.lifelines-biobank.com/. Data from the ABCD study can be obtained by users with a valid Data Use Certificate at the NIMH Data Archive (https://nda.nih.gov/). Source data underlying all figures, where unrestricted by data use agreements, are available as Source Data Files. Source data are provided with this paper.

Code availability

The code used to analyze data is available at https://osf.io/myxgk/.

References

Committee on the Evaluation of the Supplemental Security Income (SSI) Disability Program for Children with Speech Disorders and Language Disorders et al. Speech and Language Disorders in Children: Implications for the Social Security Administration’s Supplemental Security Income Program. (National Academies Press (US), Washington (DC) 2016).

Hill, E. et al. Low language capacity in childhood: A systematic review of prevalence estimates. Int. J. Lang. Commun. Disord. 59, 124–142 (2023).

Bishop, D. V. M., Snowling, M. J., Thompson, P. A., Greenhalgh, T. & the CATALISE-2 consortium. Phase 2 of CATALISE: a multinational and multidisciplinary Delphi consensus study of problems with language development: Terminology. J. Child Psychol. Psychiatry 58, 1068–1080 (2017).

Nudel, R. et al. Developmental language disorder - a comprehensive study of more than 46,000 individuals. Psychiatry Res. 323, 115171 (2023).

Snowling, M. J. Early identification and interventions for dyslexia: a contemporary view. J. Res. Spec. Educ. Needs 13, 7–14 (2013).

Yairi, E. & Ambrose, N. Epidemiology of stuttering: 21st century advances. J. Fluen. Disord. 38, 66–87 (2013).

Bishop, D. V. M. Which neurodevelopmental disorders get researched and why? PLoS ONE 5, e15112 (2010).

Campbell, T. F. et al. Risk factors for speech delay of unknown origin in 3-year-old children. Child Dev. 74, 346–357 (2003).

Feeney, R., Desha, L., Ziviani, J. & Nicholson, J. M. Health-related quality-of-life of children with speech and language difficulties: a review of the literature. Int. J. Speech Lang. Pathol. 14, 59–72 (2012).

Clegg, J., Hollis, C., Mawhood, L. & Rutter, M. Developmental language disorders–a follow-up in later adult life. Cognitive, language and psychosocial outcomes. J. Child Psychol. Psychiatry 46, 128–149 (2005).

Conti-Ramsden, G., Durkin, K., Toseeb, U., Botting, N. & Pickles, A. Education and employment outcomes of young adults with a history of developmental language disorder. Int. J. Lang. Commun. Disord. 53, 237–255 (2018).

Gialluisi, A. et al. Genome-wide association study reveals new insights into the heritability and genetic correlates of developmental dyslexia. Mol. Psychiatry 26, 3004–3017 (2021).

Klein, J. F. & Hood, S. B. The impact of stuttering on employment opportunities and job performance. J. Fluen. Disord. 29, 255–273 (2004).

Law, J., Rush, R., Schoon, I. & Parsons, S. Modeling developmental language difficulties from school entry into adulthood: literacy, mental health, and employment outcomes. J. Speech Lang. Hear. Res. 52, 1401–1416 (2009).

Nitin, R. et al. Association of developmental language disorder with comorbid developmental conditions using algorithmic phenotyping. JAMA Netw. Open 5, e2248060 (2022).

McGregor, K. K. How we fail children with developmental language disorder. Lang. Speech Hear. Serv. Sch. 51, 981–992 (2020).

Winters, K. L. & Byrd, C. T. Pediatrician referral practices for children who stutter. Am. J. Speech Lang. Pathol. 29, 1404–1422 (2020).

Barbiero, C. et al. The lost children: The underdiagnosis of dyslexia in Italy. A cross-sectional national study. PLoS ONE 14, e0210448 (2019).

Raghavan, R. et al. Population health in pediatric speech and language disorders: Available data sources and a research agenda for the field. J. Speech Lang. Hear. Res. 61, 1279–1291 (2018).

Bedore, L. M. & Peña, E. D. Assessment of bilingual children for identification of language impairment: Current findings and implications for practice. Int. J. Biling. Educ. Biling. 11, 1–29 (2008).

Shaw, D. M. et al. Phenome risk classification enables phenotypic imputation and gene discovery in developmental stuttering. Am. J. Hum. Genet. 108, 2271–2283 (2021).

Doust, C. et al. Discovery of 42 genome-wide significant loci associated with dyslexia. Nat. Genet. 54, 1621–1629 (2022).

Polikowsky, H. G. et al. Large-scale genome-wide analyses of stuttering. Nat. Genet. 57, 1835–1847 (2025).

Nayak, S. et al. The musical abilities, pleiotropy, language, and environment (MAPLE) framework for understanding musicality-language links across the lifespan. Neurobiol. Lang. 3, 615–664 (2022).

Ladányi, E., Persici, V., Fiveash, A., Tillmann, B. & Gordon, R. L. Is atypical rhythm a risk factor for developmental speech and language disorders? Wiley Interdiscip. Rev. Cogn. Sci. 11, e1528 (2020).

Olander, L., Smith, A. & Zelaznik, H. N. Evidence that a motor timing deficit is a factor in the development of stuttering. J. Speech Lang. Hear. Res. 53, 876–886 (2010).

Falk, S., Müller, T. & Dalla Bella, S. Non-verbal sensorimotor timing deficits in children and adolescents who stutter. Front. Psychol. 6, 847 (2015).

Thomson, J. M. & Goswami, U. Rhythmic processing in children with developmental dyslexia: auditory and motor rhythms link to reading and spelling. J. Physiol. Paris 102, 120–129 (2008).

Corriveau, K. H. & Goswami, U. Rhythmic motor entrainment in children with speech and language impairments: tapping to the beat. Cortex 45, 119–130 (2009).

Cumming, R., Wilson, A., Leong, V., Colling, L. J. & Goswami, U. Awareness of rhythm patterns in speech and music in children with specific language impairments. Front. Hum. Neurosci. 9, 672 (2015).

Thomson, J. M., Fryer, B., Maltby, J. & Goswami, U. Auditory and motor rhythm awareness in adults with dyslexia. J. Res. Read. 29, 334–348 (2006).

Alagöz, G. et al. The shared genetic architecture and evolution of human language and musical rhythm. Nat. Hum. Behav. 9, 376–390 (2025).

Fried, E. I. et al. Mental disorders as networks of problems: a review of recent insights. Soc. Psychiatry Psychiatr. Epidemiol. 52, 1–10 (2017).

Pennington, B. F. & Bishop, D. V. M. Relations among speech, language, and reading disorders. Annu. Rev. Psychol. 60, 283–306 (2009).

Leonard, L. B. Children with Specific Language Impairment. (MIT Press, 2014).

Cuthbert, B. N. The RDoC framework: facilitating transition from ICD/DSM to dimensional approaches that integrate neuroscience and psychopathology. World Psychiatry 13, 28–35 (2014).

Sauer-Zavala, S. et al. Current definitions of ‘Transdiagnostic’ in treatment development: A search for consensus. Behav. Ther. 48, 128–138 (2017).

Niarchou, M. et al. Genome-wide association study of musical beat synchronization demonstrates high polygenicity. Nat. Hum. Behav. 6, 1292–1309 (2022).

Eising, E. et al. Genome-wide analyses of individual differences in quantitatively assessed reading- and language-related skills in up to 34,000 people. Proc. Natl. Acad. Sci. USA 119, e2202764119 (2022).

Wieland, E. A., McAuley, J. D., Dilley, L. C. & Chang, S.-E. Evidence for a rhythm perception deficit in children who stutter. Brain Lang. 144, 26–34 (2015).

Garnett, E. O. et al. Auditory rhythm discrimination in adults who stutter: An fMRI study. Brain Lang. 236, 105219 (2023).

Peretz, I. & Vuvan, D. T. Prevalence of congenital amusia. Eur. J. Hum. Genet. 25, 625–630 (2017).

Gershon, R. C. et al. IV. NIH Toolbox cognition battery (CB): Measuring language (vocabulary comprehension and reading decoding). Monogr. Soc. Res. Child Dev. 78, 49–69 (2013).

Ullén, F., Mosing, M. A., Holm, L., Eriksson, H. & Madison, G. Psychometric properties and heritability of a new online test for musicality, the Swedish Musical Discrimination Test. Pers. Individ. Dif. 63, 87–93 (2014).

Gustavson, D. E. et al. Exploring the genetics of rhythmic perception and musical engagement in the Vanderbilt Online Musicality Study. Ann. N. Y. Acad. Sci. 1521, 140–154 (2023).

Wesseldijk, L. W. et al. Using a polygenic score in a family design to understand genetic influences on musicality. Sci. Rep. 12, 14658 (2022).

Lense, M. D., Ladányi, E., Rabinowitch, T.-C., Trainor, L. & Gordon, R. Rhythm and timing as vulnerabilities in neurodevelopmental disorders. Philos. Trans. R. Soc. Lond. B Biol. Sci. 376, 20200327 (2021).

Colling, L. J., Noble, H. L. & Goswami, U. Neural entrainment and sensorimotor synchronization to the beat in children with developmental dyslexia: An EEG study. Front. Neurosci. 11, 360 (2017).

Goswami, U., Huss, M., Mead, N., Fosker, T. & Verney, J. P. Perception of patterns of musical beat distribution in phonological developmental dyslexia: significant longitudinal relations with word reading and reading comprehension. Cortex 49, 1363–1376 (2013).

Overy, K. Dyslexia and music. From timing deficits to musical intervention. Ann. N. Y. Acad. Sci. 999, 497–505 (2003).

Huss, M., Verney, J. P., Fosker, T., Mead, N. & Goswami, U. Music, rhythm, rise time perception and developmental dyslexia: perception of musical meter predicts reading and phonology. Cortex 47, 674–689 (2011).

Kotz, S. A., Ravignani, A. & Fitch, W. T. The evolution of rhythm processing. Trends Cogn. Sci. 22, 896–910 (2018).

Patel, A. D. Vocal learning as a preadaptation for the evolution of human beat perception and synchronization. Philos. Trans. R. Soc. Lond. B Biol. Sci. 376, 20200326 (2021).

Cannon, J. J. & Patel, A. D. How beat perception co-opts motor neurophysiology. Trends Cogn. Sci. 25, 137–150 (2021).

Gordon, R. L. et al. Linking the genomic signatures of human beat synchronization and learned song in birds. Philos. Trans. R. Soc. Lond. B Biol. Sci. 376, 20200329 (2021).

Healey, E. C., Mallard, A. R. 3rd & Adams, M. R. Factors contributing to the reduction of stuttering during singing. J. Speech Hear. Res. 19, 475–480 (1976).

Packman, A., Onslow, M. & Menzies, R. Novel speech patterns and the treatment of stuttering. Disabil. Rehabil. 22, 65–79 (2000).

Boorom, O., Nayak, S., Ladanyi, E., Magne, C. L. & Gordon, R. L. Music and developmental disorders of reading and spoken language. In The Oxford Handbook of Language and Music (ed. Sammler, D.) (online edn, Oxford Academic, 2025). https://doi.org/10.1093/oxfordhb/9780192894700.013.0030.

Fatumo, S. et al. A roadmap to increase diversity in genomic studies. Nat. Med. 28, 243–250 (2022).

Gordon, R. L. et al. Confronting ethical and social issues related to the genetics of musicality. Ann. N. Y. Acad. Sci. 1522, 5–14 (2023).

Jacoby, N. et al. Commonality and variation in mental representations of music revealed by a cross-cultural comparison of rhythm priors in 15 countries. Nat. Hum. Behav. 8, 846–877 (2024).

Nayak, S. et al. Test of Prosody via Syllable Emphasis (‘TOPsy’): Psychometric Validation of a Brief Scalable Test of Lexical Stress Perception. Front. Neurosci. 16, https://doi.org/10.3389/fnins.2022.765945 (2022).

Harris, P. A. et al. Research electronic data capture (REDCap)–a metadata-driven methodology and workflow process for providing translational research informatics support. J. Biomed. Inform. 42, 377–381 (2009).

Ge, T., Chen, C.-Y., Ni, Y., Feng, Y.-C. A. & Smoller, J. W. Polygenic prediction via Bayesian regression and continuous shrinkage priors. Nat. Commun. 10, 1776 (2019).

Macmillan, N. A. & Creelman, C. D. Detection Theory: A User’s Guide. (Psychology Press, 2004).

Grahn, J. A. & Brett, M. Rhythm and beat perception in motor areas of the brain. J. Cogn. Neurosci. 19, 893–906 (2007).

Nitin, R. et al. Exploring individual differences in musical rhythm and grammar skills in school-aged children with typically developing language. Sci. Rep. 13, 2201 (2023).

Anglada-Tort, M., Harrison, P. M. C. & Jacoby, N. REPP: A robust cross-platform solution for online sensorimotor synchronization experiments. Behav. Res. Methods 54, 2271–2285 (2022).

Garavan, H. et al. Recruiting the ABCD sample: Design considerations and procedures. Dev. Cogn. Neurosci. 32, 16–22 (2018).

Iacono, W. G. et al. The utility of twins in developmental cognitive neuroscience research: How twins strengthen the ABCD research design. Dev. Cogn. Neurosci. 32, 30–42 (2018).

Stolk, R. P. et al. Universal risk factors for multifactorial diseases: LifeLines: a three-generation population-based study. Eur. J. Epidemiol. 23, 67–74 (2008).

Sijtsma, A. et al. Cohort Profile Update: Lifelines, a three-generation cohort study and biobank. Int. J. Epidemiol. 51, e295–e302 (2022).

Newbury, D. F. et al. Using polygenic profiles to predict variation in language and psychosocial outcomes in early and middle childhood. J. Speech Lang. Hear. Res. 62, 3381–3396 (2019).

Walters, C. E. Jr et al. Automated phenotyping tool for identifying developmental language disorder cases in health systems data (APT-DLD): A new research algorithm for deployment in large-scale electronic health record systems. J. Speech Lang. Hear. Res. 63, 3019–3035 (2020).

Acknowledgements

Research reported here was supported by the National Institute on Deafness and Other Communication Disorders (NIDCD), the National Institute on Drug Abuse (NIDA) and the National Institute of General Medical Sciences (NIGMS) of the National Institutes of Health, under award numbers R01DC016977 to R.L.G.; R03DC021550 and R21DC021276 to S.N.; R01DA059804 to D.G.; and P20GM1023 supporting HSL. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. E.E., M.A.M., F.U. and S.E.F. acknowledge the support of the Max Planck Society. E.E. is supported by a Veni grant of the Dutch Research Council (NWO; VI.Veni.202.072). The Lifelines initiative has been made possible by a subsidy from the Dutch Ministry of Health, Welfare and Sport, the Dutch Ministry of Economic Affairs, the University Medical Center Groningen (UMCG), Groningen University and the Provinces in the North of the Netherlands (Drenthe, Friesland, Groningen). The authors wish to acknowledge the services of the Lifelines Cohort Study, the contributing research centers delivering data to Lifelines and all the study participants. Data used in the preparation of this article were obtained from the Adolescent Brain Cognitive Development (ABCD) Study (https://abcdstudy.org), held in the NIMH Data Archive (NDA). This is a multisite, longitudinal study designed to recruit more than 10,000 children aged 9–10 and follow them over 10 years into early adulthood.
The ABCD Study® is supported by the National Institutes of Health and additional federal partners under award numbers U01DA041048, U01DA050989, U01DA051016, U01DA041022, U01DA051018, U01DA051037, U01DA050987, U01DA041174, U01DA041106, U01DA041117, U01DA041028, U01DA041134, U01DA050988, U01DA051039, U01DA041156, U01DA041025, U01DA041120, U01DA051038, U01DA041148, U01DA041093, U01DA041089, U24DA041123, U24DA041147. A full list of supporters is available at https://abcdstudy.org/federal-partners.html. A listing of participating sites and a complete listing of the study investigators can be found at https://abcdstudy.org/consortium_members/. ABCD consortium investigators designed and implemented the study and/or provided data, but did not necessarily participate in the analysis or writing of this report. This manuscript reflects the views of the authors and may not reflect the opinions or views of the NIH or ABCD consortium investigators. We acknowledge Grace Schlicht’s assistance with the preparation of data and code for sharing. We would also like to thank the employees of 23andMe, Inc., for making this work possible and all the research participants who contributed their data.

Author information

Authors and Affiliations

Contributions